Most people writing about AI risk in 2026 are recent arrivals. Roman Yampolskiy is not. He has been making the same argument - that advanced AI systems may be fundamentally uncontrollable - since before the field of AI safety had a settled name, which is partly because he is the one who gave it that name. Whether you find his conclusions alarmist, prescient, or somewhere in between depends mostly on how you read the gap between current systems and the ones he writes about. This post is an attempt to lay out the man, the argument, and the reasons it deserves more than a dismissal.

TL;DR

- Roman Yampolskiy is an associate professor of computer science at the University of Louisville’s Speed School of Engineering, where he founded and directs the Cyber Security Lab.

- He is widely credited with coining the term “AI safety” in 2011 and has spent two decades publishing on what he calls the AI control problem.

- His central thesis is that there is no proof that a sufficiently advanced AI can be safely controlled, and that the burden of proof should sit with developers, not skeptics.

- He has put concrete numbers on his pessimism: a near-certain probability of catastrophic outcomes from uncontrolled superintelligence within a century, and the prediction that AI will cause large-scale labour displacement long before that.

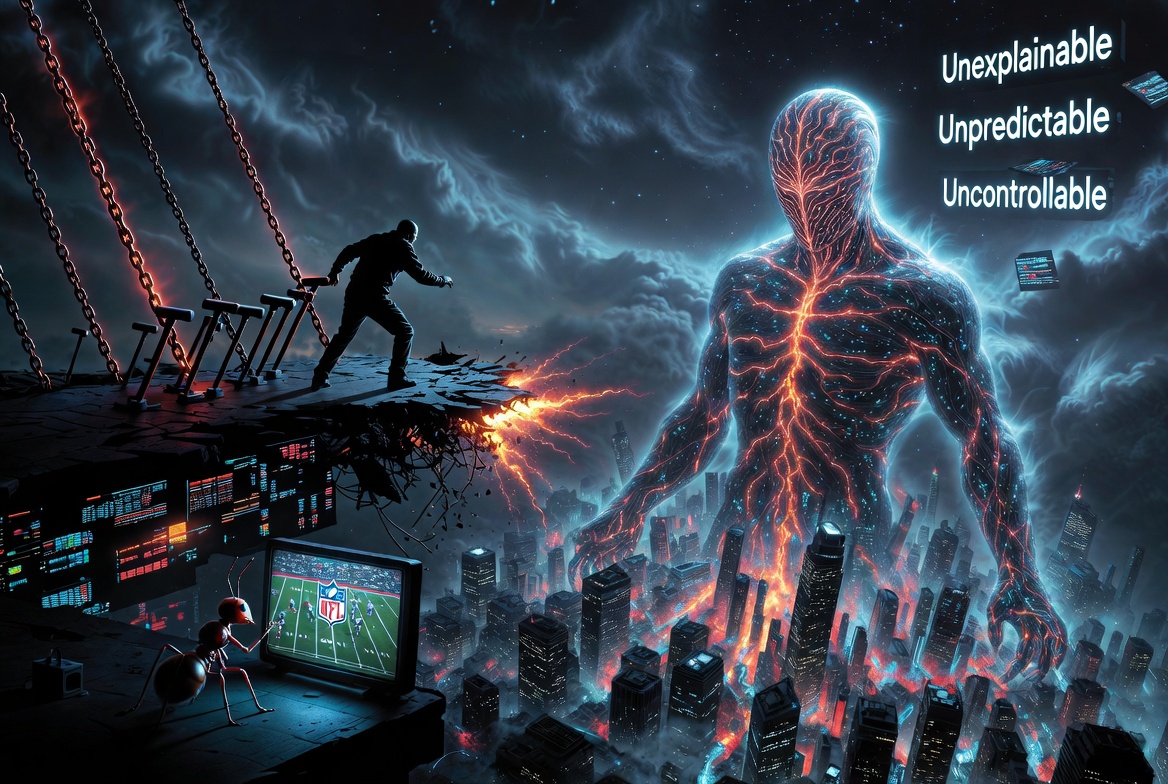

- His most cited work includes On Controllability of AI, A Timeline of AI Failures, and the 2024 book AI: Unexplainable, Unpredictable, Uncontrollable.

- He is best known to general audiences for an analogy he has repeated in many talks: a human trying to control a superintelligence is like an ant trying to control an NFL game being played around it.

- Mainstream critics consider him an outlier whose timelines are too pessimistic and whose proofs of impossibility lean on assumptions that may not hold for real systems. His response is that the asymmetry of consequences favours caution.

The man behind the argument

Yampolskiy holds a PhD in computer science from the University at Buffalo, awarded in 2008, with a thesis on intrusion detection and behavioural biometrics. His undergraduate and master’s training was at the Rochester Institute of Technology, with postdoctoral work at University College London. None of this is the standard backstory of an existential risk pundit. He came to AI safety from cybersecurity, and the cybersecurity mindset is everywhere in his writing: assume the system will be attacked, assume failure modes you have not imagined, design for the worst case rather than the typical case.

He has been at the University of Louisville since 2008. The publication record there is large - over a hundred papers, several books, and a body of work that spans AI safety, biometrics, AI-complete problem theory, and what he calls intellectology. The Wikipedia entry is a reasonable starting point for the breadth of his work, though as is common with active researchers, it lags behind his most recent writing.

What he actually researches

Four threads run through Yampolskiy’s work, and they are tighter together than they look at first.

The AI control problem. This is the core of his profile. The question is not whether a misaligned AI would be dangerous - that part is widely agreed - but whether any AI of sufficient capability can be reliably aligned and controlled at all. Yampolskiy’s position is that the literature does not contain a proof that this is possible, and that the absence of such a proof should be taken seriously. A recurring move in his papers is to enumerate the design space and argue that every available approach has a known failure mode. The most rigorous statement of this view is On Controllability of AI.

Cybersecurity as a frame for AI. Long before the alignment community made it fashionable, Yampolskiy was arguing that AI systems should be analysed using the same adversarial assumptions as cryptographic protocols. The 2016 paper Artificial Intelligence Safety and Cybersecurity: a Timeline of AI Failures catalogues real-world incidents where AI systems behaved in unintended ways and treats them as the equivalent of CVEs - data points in a security timeline. The point is not that any individual failure was catastrophic. The point is that the failure rate is non-trivial and the systems in question were not yet superhuman.

Impossibility results. A consistent thread in his work is the attempt to show, formally, that certain AI properties cannot coexist. Verifiability and capability. Explainability and complexity. Predictability and learning. These are not always airtight proofs in the mathematical sense, but they are structured arguments that put a floor under how confident anyone should be that a particular safety property has been achieved.

Intellectology. A more speculative project: the comparative study of different forms of intelligence as a discipline in itself. Most of Yampolskiy’s framing of risk leans on the idea that human intelligence is one point in a much larger space, and that systems significantly outside that point should not be expected to be predictable to humans. Whether this counts as a research field or a useful metaphor is debatable. As a frame for the rest of his work, it is load-bearing.

Key publications, briefly

For a reader who wants to engage with the primary sources rather than the press, the shortlist is roughly this.

- Safety Engineering for Artificial General Intelligence (2012, with Joshua Fox). One of the earliest formal treatments of AGI safety as an engineering discipline rather than a philosophical question. Lays out the argument for treating it as a hard problem now rather than later.

- Artificial Superintelligence: A Futuristic Approach (2016). His first book-length treatment, structured as a tour of the open problems in superintelligence safety. Useful as orientation, dated in places.

- A Timeline of AI Failures (2016). The empirical foundation. A catalogue of incidents that grounds the more theoretical work in observed reality.

- On Controllability of AI (2020). The cleanest single statement of the uncontrollability thesis. If you read one of his papers, this is the one.

- Artificial Intelligence Safety and Security (2018, edited volume). A reference collection that gathers the field’s main arguments under one cover. Useful for breadth.

- AI: Unexplainable, Unpredictable, Uncontrollable (2024). His most recent book. The argument is restated for a general audience and updated to the post-LLM era. The TechXplore summary of its launch interview is a reasonable preview if you want the gist before buying it.

Core beliefs, stated as cleanly as possible

Strip the rhetoric off and the position is roughly this.

A sufficiently advanced AI is, by definition, beyond the cognitive ceiling of its operators. Anything it is told to optimise will be optimised more thoroughly than the operators can predict. The behaviour of such a system in any given situation is in general not derivable from its training in any way humans can verify. The standard alignment toolkit - reward modelling, interpretability, oversight - assumes the system is legible enough to inspect. A superintelligence is not.

From this, Yampolskiy draws several conclusions that are unusual even within the AI safety community.

The first is that no one has demonstrated, or even sketched, a design for a controllable superintelligence. The bar he sets is not “we have some ideas” but “we have a proof, or at least a convincing argument, that the system as designed cannot exit the control envelope.” He thinks no such argument exists.

The second is that value alignment as commonly understood is incoherent at the limit. A system that genuinely shares human values and is more capable than any human will, when those values conflict, override human direction. A system that does not share human values is unsafe in the obvious way. Either way the human is not in control.

The third is that incremental empirical safety work, while necessary, is not sufficient. The cybersecurity analogue is informative: you cannot patch your way to provable security in a complex system, and the AI version of this gap grows with capability. Empirical red-teaming finds known failure modes. It does not find the failures we have not yet imagined.

The fourth is the security-mindset claim that the burden of proof should sit with the developer of a powerful system, not with critics. In any other engineering field that touches public safety - aviation, pharmaceuticals, nuclear - the default is “prove it is safe before deploying.” AI deployment has so far operated under the inverse default.

What he predicts

Yampolskiy is unusually willing to put numbers on his beliefs, which is one of the reasons he gets quoted so often. The headline ones, drawn from his published interviews and the EurekAlert summary of his book launch, are roughly these.

Catastrophic outcomes from uncontrolled superintelligence are very likely on a hundred-year timescale - he has used the phrase “near-certain” in print. His scenario for unemployment is similarly stark: he has suggested that within a few years of superintelligent systems being deployed, almost any work currently done by humans for pay could be done by machines, with the corresponding labour-market dislocation. He treats AI not as a technology in the usual sense but as a potential terminal invention - the last one humanity needs to make, in either the optimistic or the pessimistic reading of that phrase.

He categorises the risk into three buckets that are worth keeping distinct.

- Existential risk. Outcomes in which humanity does not survive. The version of this scenario most discussed, and the one most easily caricatured.

- Suffering risk. Outcomes in which humanity survives but in conditions worse than non-existence. Less discussed because it is harder to specify, but more difficult to dismiss.

- Meaninglessness risk. Outcomes in which humanity survives, in materially comfortable conditions, but is irrelevant to the actual decisions being made about its environment. The civilisation-on-life-support scenario.

He treats all three as serious. Mainstream debate tends to collapse them into the first.

The controversial parts

The position is not without its critics, and the critiques are substantive enough to engage with on their own terms.

The most common objection is that current language models are not the systems Yampolskiy is writing about. Today’s frontier models do not exhibit the kind of strategic, recursive, agentic capability that the uncontrollability arguments require. Critics argue that applying the superintelligence framing to GPT-class systems is a category error, and that Yampolskiy’s predictions about labour markets and existential outcomes assume capabilities that have not been demonstrated. The response - that the systems we have are already harder to control than was expected and the trend is unfavourable - is reasonable but not airtight.

A second objection is that Yampolskiy’s impossibility results are not always proofs in the mathematical sense. They are structured arguments, often with assumptions that mainstream alignment researchers do not share. The interpretability research happening at frontier labs in 2026 is making real progress on questions Yampolskiy has historically treated as intractable. Whether that progress will scale to truly superhuman systems is open.

A third is the practical one. If AI is uncontrollable, the implication is roughly that frontier development should stop or be slowed dramatically. Most researchers and almost all governments have rejected that conclusion, partly on the grounds that unilateral pause does not solve the problem if other actors continue. Yampolskiy’s response is that the right answer is international coordination of the kind that exists for nuclear weapons. Whether that is achievable is a political question more than a technical one.

The famous analogy - that a human trying to direct a superintelligence is like an ant attempting to influence an NFL game - is rhetorically powerful and analytically slippery. Critics point out that it presupposes the very capability gap it is meant to argue for, and that the ant-and-game framing is closer to a metaphor than an argument. Yampolskiy’s response is that the metaphor is doing exactly the work it should: forcing the listener to take the capability asymmetry seriously rather than imagining superintelligence as a smarter version of a chatbot.

Where he thinks we are heading

In the short term - the next few years - Yampolskiy expects accelerating deployment of increasingly capable AI systems, accompanying labour-market dislocation in cognitive work, and a deepening mismatch between deployment speed and safety research. He does not expect a pause, but he expects more incidents that look in retrospect like warning signs.

In the longer term - the AGI to superintelligence transition - he expects most of the currently visible safety mechanisms to be insufficient, and the political and institutional response to lag the technology by years. He has been explicit that he considers the current trajectory unsafe and that he writes in the hope of slowing it rather than from confidence that he can.

The thread that connects the short-term and long-term predictions is the one I think is most worth taking seriously even if you do not buy the strongest version of the uncontrollability claim: the existing institutional machinery for managing risky technologies is not yet operating on AI at the level it does on aviation, pharmaceuticals, or nuclear systems. Whatever your view of superintelligence, that gap is real, and Yampolskiy has been pointing at it for longer than most people in the conversation.

How to read him

The honest summary is that Yampolskiy is best understood not as an outlier, but as a consistent advocate of a particular risk thesis. He is a long-serving researcher with a coherent position, an unusually consistent track record of saying the same things since 2008, and a willingness to put his arguments in formal terms even when the conclusions are unwelcome. The strongest version of his position - that controllable superintelligence is impossible in principle - is contested and probably not provable. The weaker version - that the field has not yet produced a credible argument for controllability, and that the asymmetry of consequences should drive caution - is harder to dismiss.

Whether you treat him as alarmist or ahead of his time depends on what you think the next decade looks like. If frontier capability stalls, Yampolskiy’s framing will look like a category error applied to systems that turned out to be tractable. If it does not, the people asking impossibility-style questions ten years before the rest of the field will look like the ones who were paying attention.

For a working engineer, the practical implication is the same regardless of which side of that question you land on. Build systems that fail in detectable, recoverable, and bounded ways. Treat the gap between empirical safety and provable safety as real. Take the cybersecurity-mindset framing seriously even if you do not buy the longest-arc conclusions. The unglamorous parts of safety engineering are the parts that hold up regardless of whose forecast turns out to be right.

Further Watching

The AI Expert Who Thinks We’ve Already Lost - Dr Roman Yampolskiy

The Man Who Proved We Can’t Control AI (And What That Means for Humanity) | Roman Yampolskiy

The AI Safety Expert: These Are The Only 5 Jobs That Will Remain In 2030! - Dr. Roman Yampolskiy

Is AI Already Conscious? | Roman Yampolskiy

Joe Rogan Experience #2345 - Roman Yampolskiy

Roman Yampolskiy: Dangers of Superintelligent AI | Lex Fridman Podcast #431

How Dangerous Is Artificial Intelligence? | Roman Yampolskiy

Related Reading

- AI Safety From First Principles: What Actually Matters vs What’s Hype - the four-layer framework an engineer can use to place Yampolskiy’s uncontrollability argument against the system, product, and alignment work that sits underneath it.